Using Mobile VR to Assess Claustrophobia During an MRI

New methods for exposure therapy

Stephanie O’Malley

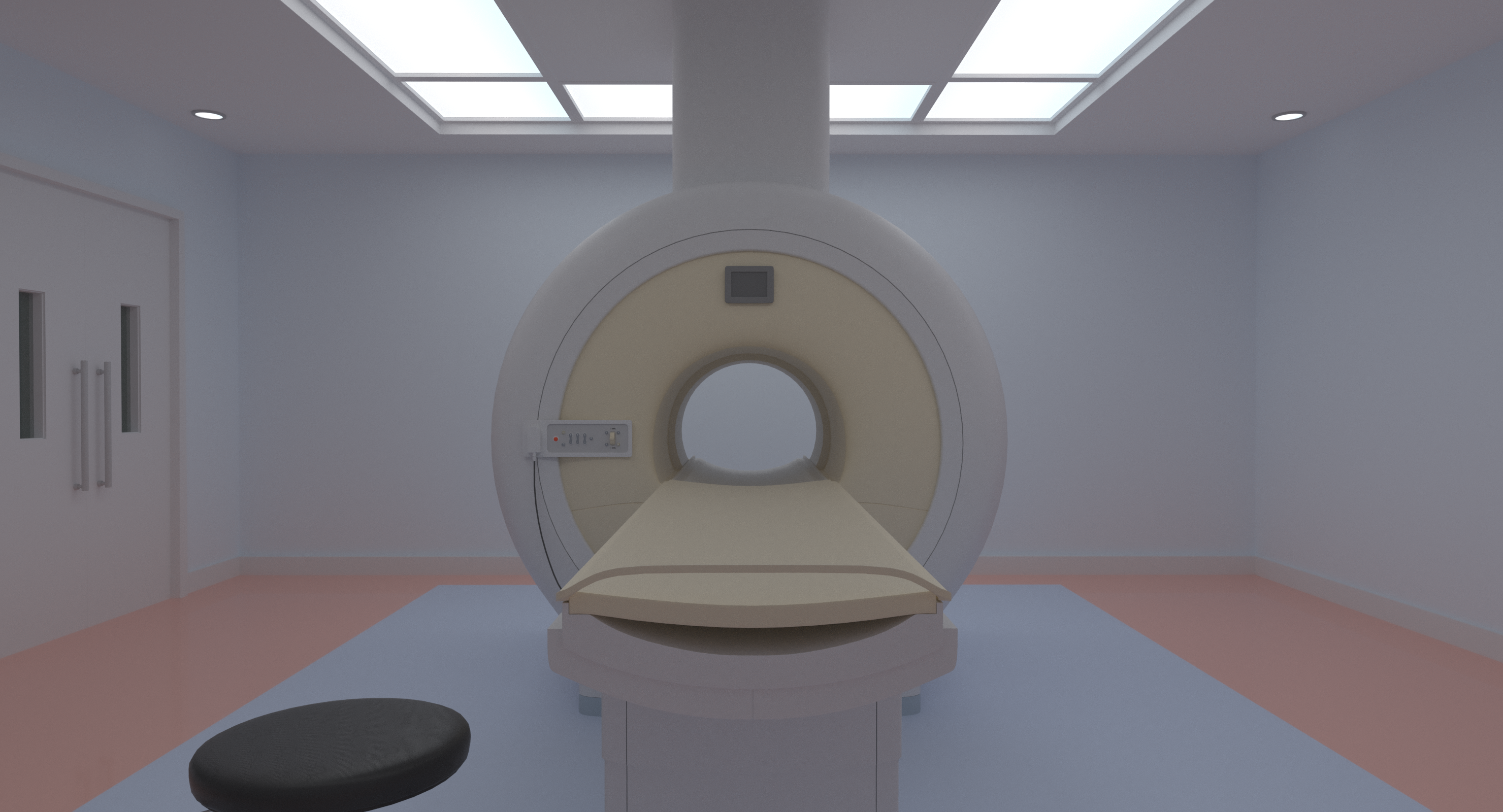

Dr. Richard Brown and his colleague Dr. Jadranka Stojanovska had an idea for how VR could be used in a clinical setting. Having recognized that patients undergoing MRI scans often experience claustrophobia, they wanted to use VR simulations to show prospective patients what being inside an MRI machine might feel like.

Claustrophobia in this situation is a surprisingly common problem. While there are 360-degree videos that convey what an MRI might look like, these fail to address the major factor contributing to claustrophobia: the perceived confined space within the bore. 360-degree videos tend to distort the environment, making it seem farther away than it really is, and so fail to induce the feelings of claustrophobia that a real MRI bore would produce. With funding from the Patient Education Award Committee, Dr. Brown approached the Duderstadt Center to see if a better solution could be produced.

To simulate the experience of an MRI accurately, a CGI MRI machine was constructed and ported to the Unity game engine. A customizable avatar representing the viewer’s body was also added to give viewers a sense of self. Wearing a VR headset, viewers see their avatar body and the true proportions of the MRI machine from their own perspective as they are slowly transported into the bore. Verbal instructions mimic what would be said throughout the course of a real MRI, and the intimidating boom of the machine plays as the simulated scan proceeds.

Two modes are provided within the app, feet first and head first, to accommodate the most common scanning procedures that have been shown to induce claustrophobia.

To make the simulation accessible to patients, the MRI app was developed with mobile VR in mind, allowing anyone (patients or clinicians) with a VR-capable phone to download the app and use it with a budget-friendly headset like Google Daydream or Cardboard.

Dr. Brown’s VR simulator was recently featured as the cover story in the September edition of Tomography magazine.