Extended Reality: changing the face of learning, teaching, and research

Written by Laurel Thomas, Michigan News

Students in a film course can evoke new emotions in an Orson Welles classic by virtually changing the camera angles in a dramatic scene.

Any one of us could take a smartphone, laptop, paper, Play-Doh and an app developed at U-M, and with a little direction become a mixed reality designer.

A patient worried about an upcoming MRI may be able to put fears aside after virtually experiencing the procedure in advance.

This is XR—Extended Reality—and the University of Michigan is making a major investment in how to utilize the technology to shape the future of learning.

Recently, Provost Martin Philbert announced a three-year funded initiative led by the Center for Academic Innovation to fund XR, a term used to encompass augmented reality, virtual reality, mixed reality and other variations of computer-generated real and virtual environments and human-machine interactions.

The Initiative will explore how XR technologies can strengthen the quality of a Michigan education, cultivate interdisciplinary practice, and enhance a national network of academic innovation.

Throughout the next three years, the campus community will explore new ways to integrate XR technologies in support of residential and online learning and will seek to develop innovative partnerships with external organizations around XR in education.

“Michigan combines its public mission with a commitment to research excellence and innovation in education to explore how XR will change the way we teach and learn from the university of the future,” said Jeremy Nelson, new director of the XR Initiative in the Center for Academic Innovation.

Current Use of XR

In January 2018, a group of students created the Alternative Reality Initiative to provide a community for hosting development workshops, discussing industry news, and connecting students in the greater XR ecosystem.

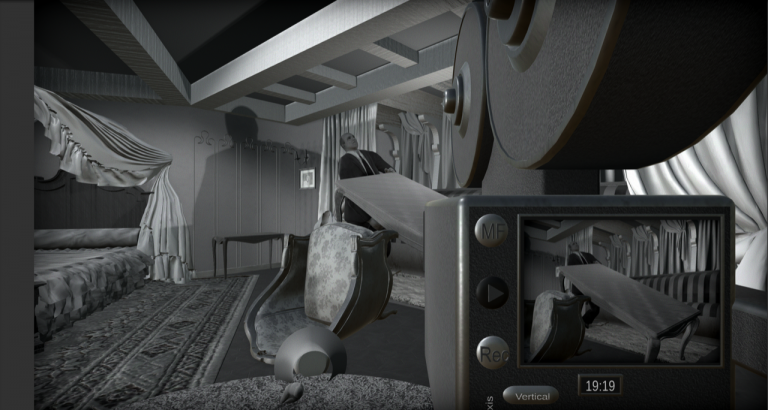

In Matthew Solomon’s film course, students can alter a scene in Orson Welles’ classic “Citizen Kane.” U-M is home to one of the largest Orson Welles collections in the world.

Solomon’s concept for developers was to take a clip from the movie and model a scene to look like a virtual reality setting—almost like a video game. The goal was to bring a virtual camera in the space so students could choose shot angles to change the look and feel of the scene.

This VR tool will be used fully next semester to help students talk about filmmaker style, meaning and choice.

“We can look at clips in class and be analytical but a tool like this can bring these lessons home a little more vividly,” said Solomon, associate professor in the Department of Film, Television and Media.

Sara Eskandari, who just graduated with a Bachelor of Arts from the Penny Stamps School of Art and a minor in Computer Science, helped develop the tool for Solomon’s class as a member of the Visualization Studio team.

“I hope students can enter an application like ‘Citizen Kane’ and feel comfortable experimenting, iterating, practicing, and learning in a low-stress environment,” Eskandari said. “Not only does this give students the feeling of being behind an old-school camera, and supplies them with practice footage to edit, but the recording experience itself removes any boundaries of reality.

“Students can float to the ceiling to take a dramatic overhead shot with the press of a few buttons, and a moment later record an extreme close up with entirely different lighting.”

Sara Blair, vice provost for academic and faculty affairs, and the Patricia S. Yaeger Collegiate Professor of English Language and Literature, hopes to see more projects like “Citizen Kane.”

“An important part of this project, which will set it apart from experiments with XR on many other campuses, is our interest in humanities-centered perspectives to shape innovations in teaching and learning at a great liberal arts institution,” Blair said. “How can we use XR tools and platforms to help our students develop historical imagination or to help students consider the value and limits of empathy, and the way we produce knowledge of other lives than our own?

“We hope that arts and humanities colleagues won’t just participate in this [initiative] but lead in developing deeper understandings of what we can do with XR technologies, as we think critically about our engagements with them.”

U-M Faculty Embracing XR

Mark W. Newman, professor of information and of electrical engineering, and chair of the Augmented and Virtual Reality Steering Committee, said indications are that many faculty are thinking about ways to use the technology in teaching and research, as evidenced by progress on an Interdisciplinary Graduate Certificate Program in Augmented and Virtual Reality.

Newman chaired the group that pulled together a number of faculty working in XR to identify the scope of the work under way on campus, and to recommend ways to encourage more interdisciplinary collaboration. He’s now working with deans and others to move forward with the certificate program that would allow experiential learning and research collaborations on XR projects.

“Based on this, I can say that there is great enthusiasm across campus for increased engagement in XR and particularly in providing opportunities for students to gain experience employing these technologies in their own academic work,” Newman said, addressing the impact the technology can have on education and research.

“With a well-designed XR experience, users feel fully present in the virtual environment, and this allows them to engage their senses and bodies in ways that are difficult if not impossible to achieve with conventional screen-based interactive experiences. We’ve seen examples of how this kind of immersion can dramatically aid the communication and comprehension of otherwise challenging concepts, but so far we’re only scratching the surface in terms of understanding exactly how XR impacts users and how best to design experiences that deliver the effects intended by experience creators.”

Experimentation for All

Encouraging everyone to explore the possibilities of mixed reality (MR) is a goal of Michael Nebeling, assistant professor in the School of Information, who has developed unique tools that can turn just about anyone into an augmented reality designer using his ProtoAR or 360proto software.

Most AR projects begin with a two-dimensional design on paper that is then made into a 3D model, typically by a team of experienced 3D artists and programmers.

With Nebeling’s ProtoAR app, content can be sketched on paper or molded with Play-Doh; the designer then either moves the camera around the object or spins the piece in front of the lens to create motion. ProtoAR then blends the physical and digital content to come up with various AR applications.

Using his latest tool, 360proto, they can even make the paper sketches interactive so that users can experience the AR app live on smartphones and headsets, without spending hours and hours on refining and implementing the design in code.

These are the kinds of technologies that not only allow his students to learn about AR/VR in his courses, but also have practical applications. For example, people can experience their dream kitchen at home, rather than having to use their imaginations when clicking things together on home improvement sites. He also is working on getting many solutions directly into future web browsers so that people can access AR/VR modes when visiting home improvement sites, cooking a recipe in the kitchen, planning a weekend trip with museum or gallery visits, or reading articles on Wikipedia or in the news.

Nebeling is committed to “making mixed reality a thing that designers do and users want.”

“As a researcher, I can see that mixed reality has the potential to fundamentally change the way designers create interfaces and users interact with information,” he said. “As a user of current AR/VR applications, however, it’s difficult to see that potential even for me.”

He wants to enable a future in which “mixed reality will be mainstream, available and accessible to anyone, at the snap of a finger. Where everybody will be able to ‘play,’ be it as consumer or producer.”

XR and the Patient Experience

“The collaboration with the Duderstadt team has enabled us to develop a cutting-edge tool that allows patients to truly experience an MRI before having a scan,” said Dr. Richard K.J. Brown, professor of radiology. The patient puts on a headset and is ‘virtually transported’ into an MRI tube. A calming voice explains the MRI exam, as the patient hears the realistic sounds of the magnet in motion, simulating an exam experience.

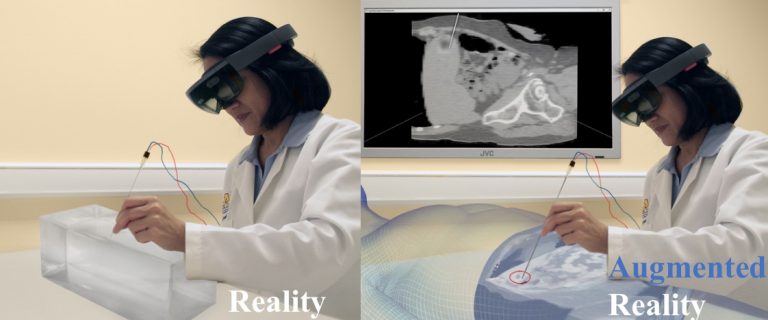

The team also is developing an Augmented Reality tool to improve the safety of CT-guided biopsies.

Team members include doctors Brown, Jadranka Stojanovska, Matt Davenport, Ella Kazerooni and Elaine Caoili from Radiology, and Dan Fessahazion, Sean Petty, Stephanie O’Malley, Theodore Hall and several students from the Visualization Studio.

“The Duderstadt Center and the Visualization Studio exists to foster exactly these kinds of collaborations,” said Daniel Fessahazion, the center’s associate director for emerging technologies. “We have a deep understanding of the technology and collaborate with faculty to explore its applicability to create unique solutions.”

Academic Innovation’s Nelson said the first step of this new initiative is to assess and convene early innovators in XR from across the university to shape how this technology may best support residential and online learning.

“We have a unique opportunity with this campus-wide initiative to build upon the efforts of engaged students, world-class faculty, and our diverse alumni network to impact the future of learning,” he said.