Fall 2024 XR Classes

Looking for classes that incorporate XR?

EECS 440 – Extended Reality for Social Impact (Capstone / MDE)

Contact with Questions:

Austin Yarger

ayarger@umich.edu

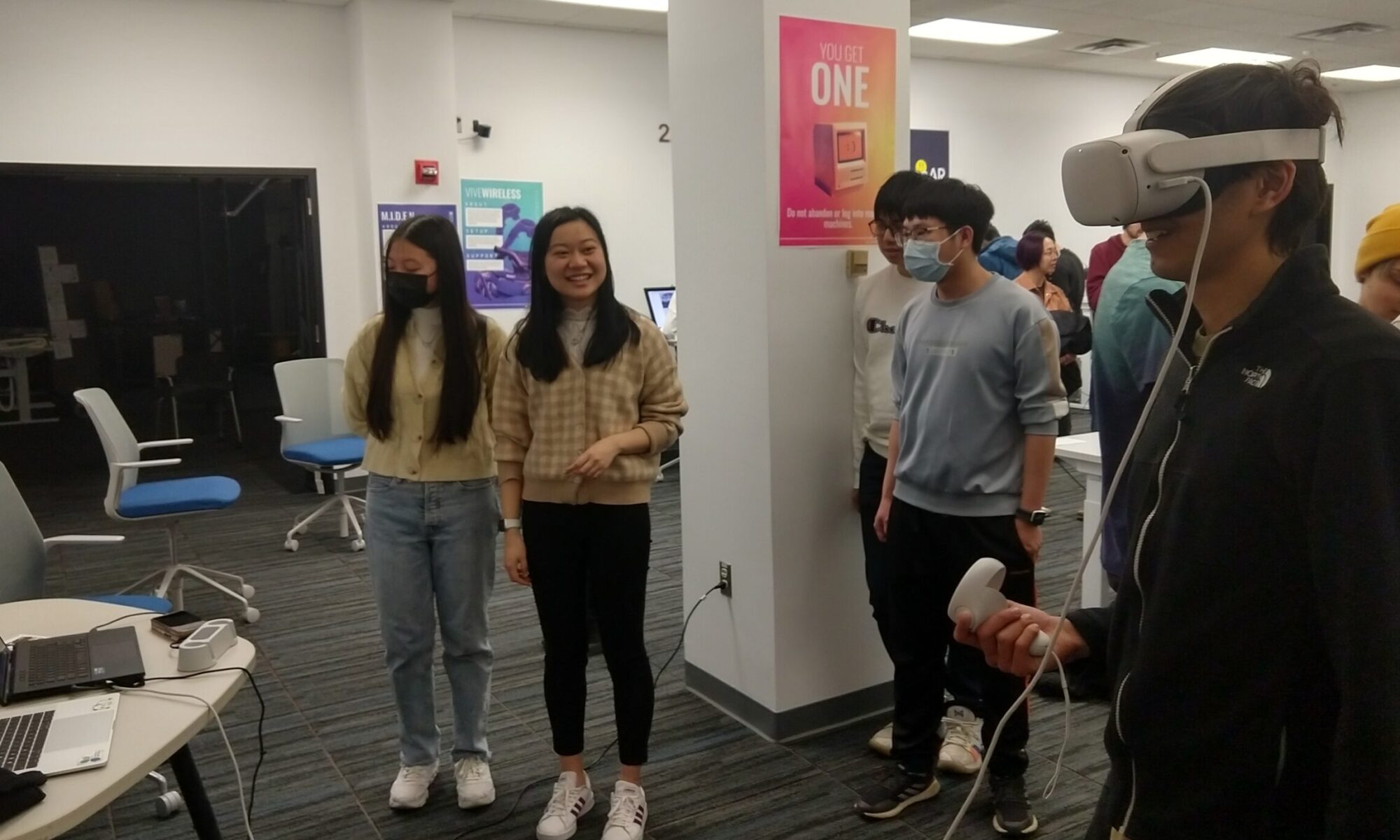

Extended Reality for Social Impact: design, development, and application of virtual and augmented reality software for social impact. Topics include virtual reality, augmented reality, game engines, ethics and accessibility, interaction design patterns, agile project management, stakeholder outreach, XR history and culture, and portfolio construction. Student teams develop and exhibit new, socially impactful VR/AR applications.

ENTR 390.005 & 390.010 – Intro to Entrepreneurial Design, VR Lab

Contact with Questions:

Sara ‘Dari’ Eskandari

seskanda@umich.edu

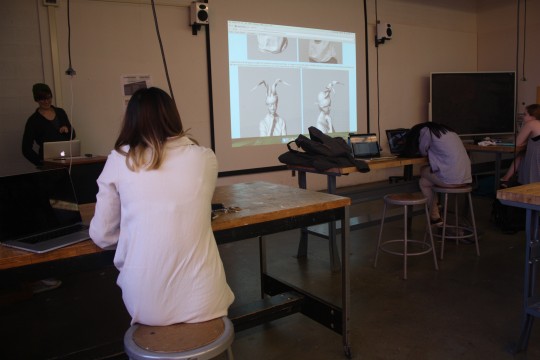

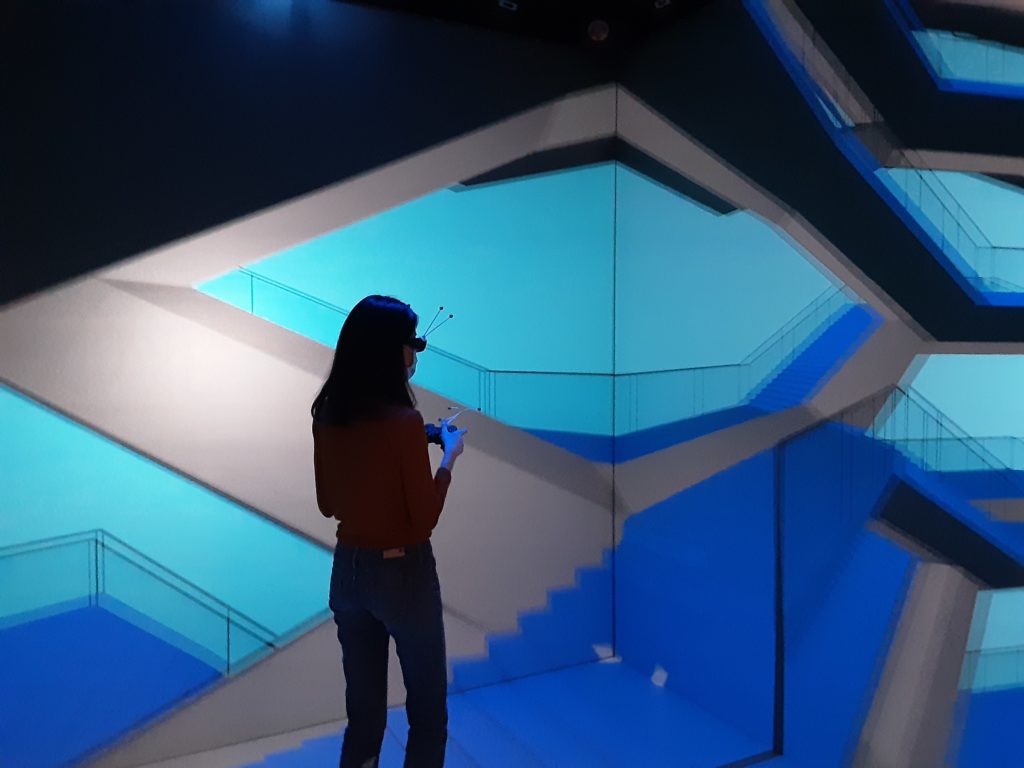

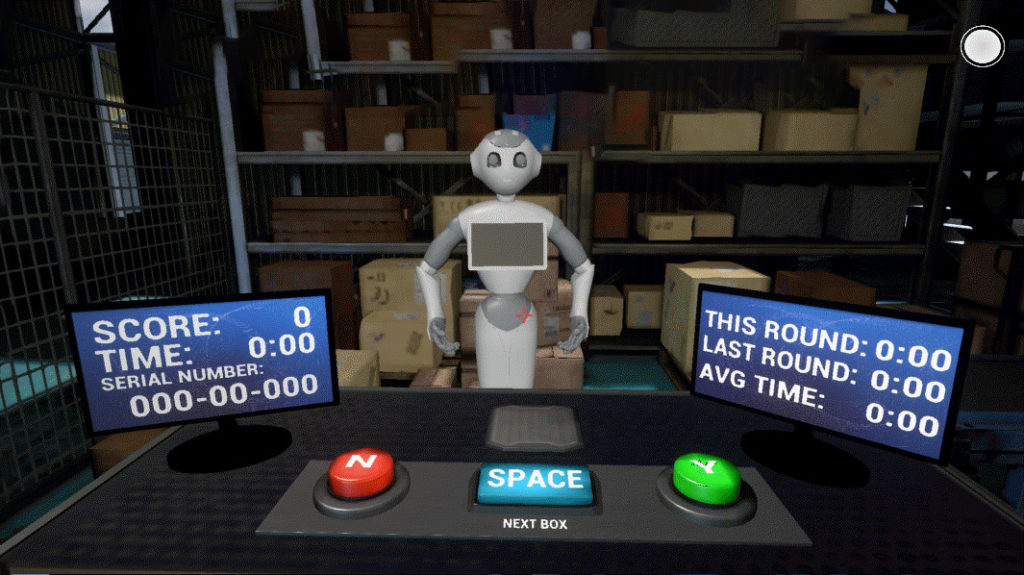

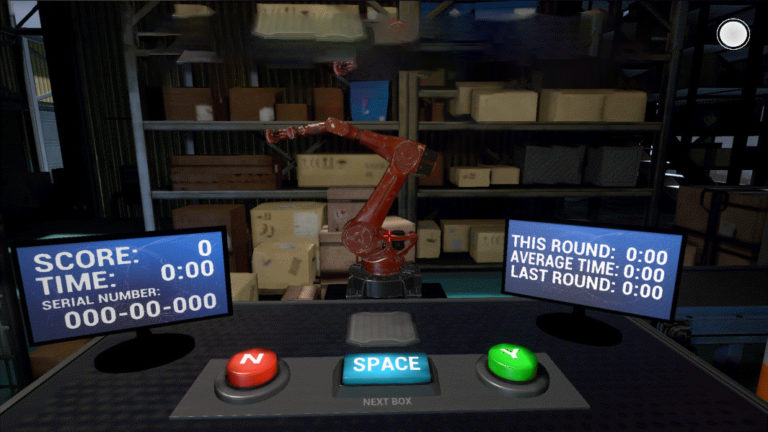

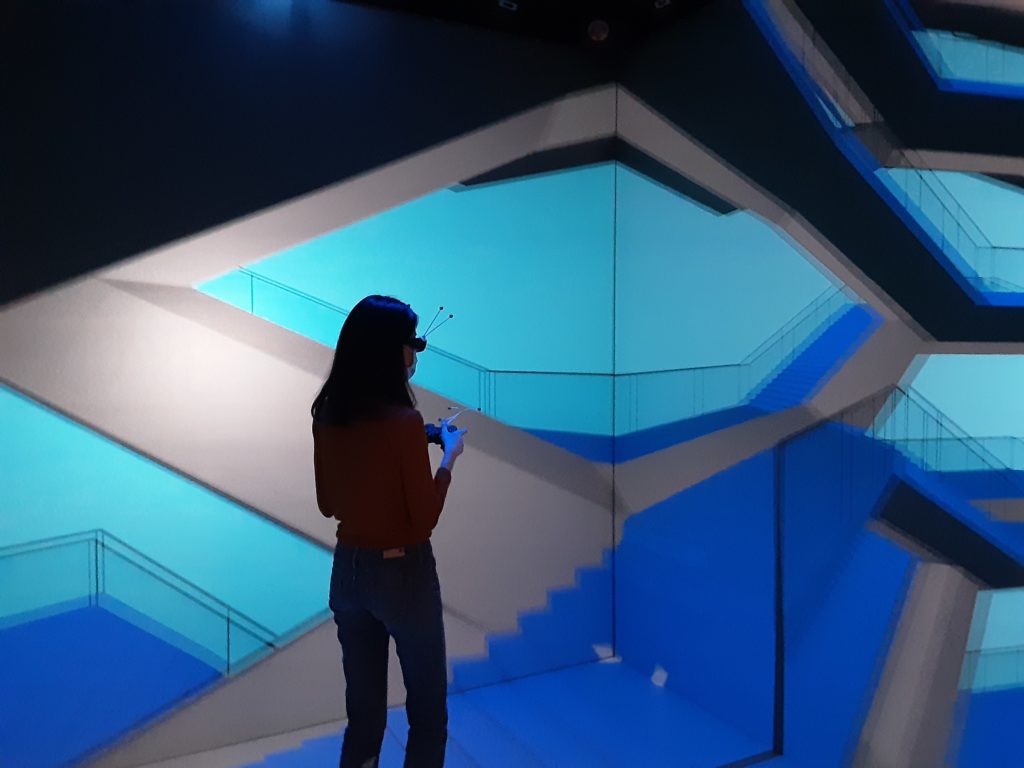

In this lab, you’ll learn how to develop virtual reality content for immersive experiences on the Meta Quest, in the MIDEN, or for virtual production, using Unreal Engine and 3D modeling software. You’ll also be introduced to asset creation and scene assembly by bringing assets into Unreal Engine and building interactive experiences. By the end of the class, you’ll be able to develop virtual reality experiences, simulations, and tools that address real-world problems.

Students will understand how to generate digital content for virtual reality platforms; be knowledgeable about versatile file formats, content pipelines, hardware platforms, and industry standards; understand methods of iterative design and how to create functional prototypes in this medium; apply what they learn in the lecture section of this course to determine what is possible, what is marketable, and which distribution methods are available on this platform; and become familiar with documenting their design process, pitching their ideas to others, and giving and receiving quality feedback.

UARTS 260 – Empathy in Point Clouds

Contact with Questions:

Dawn Gilpin

dgilpin@umich.edu

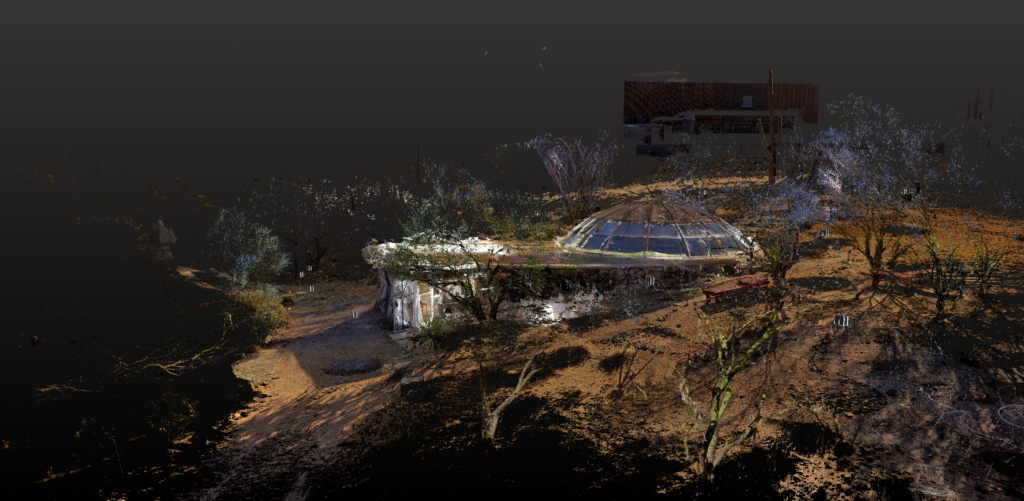

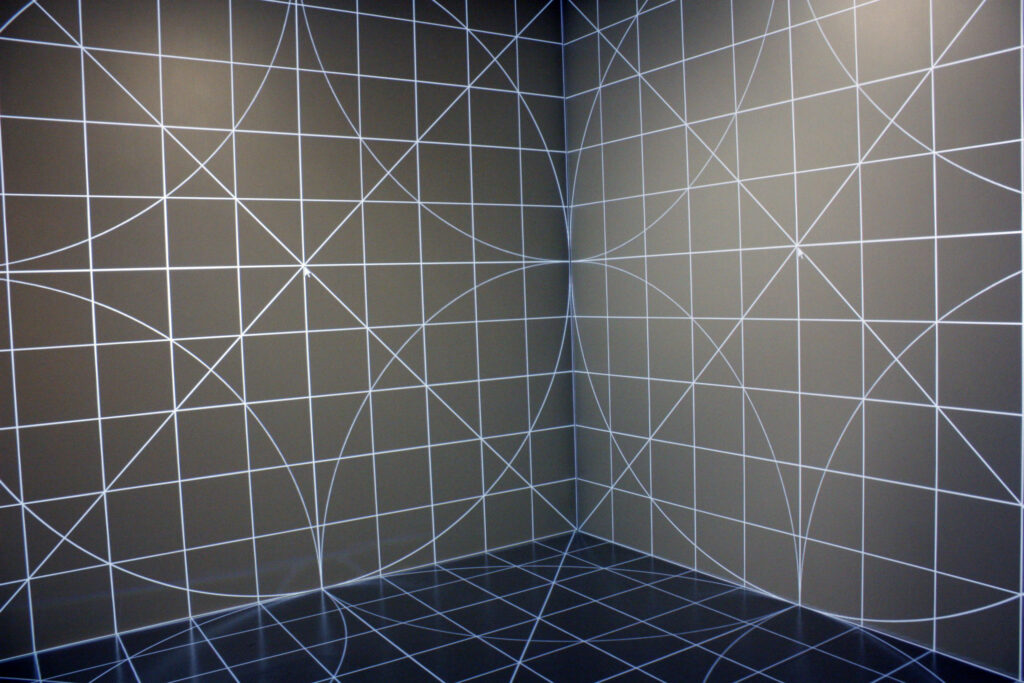

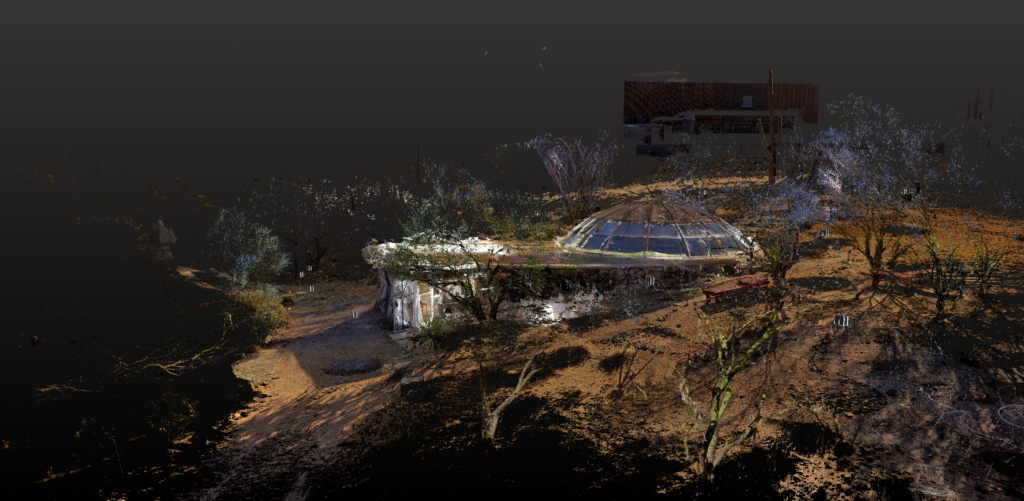

Empathy in Point Clouds: Spatializing Design Ideas and Storytelling through Immersive Technologies integrates LiDAR scanning, photogrammetry, and Unreal Engine into education, expanding the methodologies and processes available for architectural design. As we enter the third year of the FEAST program, we turn our attention to storytelling and worldbuilding, using site-specific point cloud models as the context for our narratives. This year, the team will produce one or two spatial narratives for the three immersive technology platforms we work with: the Meta Quest VR headset, the MIDEN VR CAVE, and the LED stage.

ARTDES 217 – Bits and Atoms

Contact with Questions:

Sophia Brueckner

sbrueckn@umich.edu

This is an introduction to digital fabrication within the context of art and design. Students learn about the numerous types of software and tools available and develop proficiency with the specific software and tools at Stamps. Students discuss the role of digital fabrication in creative fields.

ARTDES 420 – Sci-Fi Prototyping

Contact with Questions:

Sophia Brueckner

sbrueckn@umich.edu

This course links science fiction with speculative and critical design as a means of encouraging the ethical and thoughtful design of new technologies. With a focus on creating functional prototypes, the course combines the analysis of science fiction with physical fabrication or code-based interpretations of the technologies that science fiction depicts.

SI 559 – Introduction to AR/VR Application Design

Contact with Questions:

Michael Nebeling

nebeling@umich.edu

This course introduces students to augmented reality (AR) and virtual reality (VR) interfaces. It covers basic concepts, and students create two mini-projects using prototyping tools, one focused on AR and one on VR. The course requires no special background or programming experience.

FTVM 394 / DIGITAL 394 – Topics in Digital Media Production, Virtual Reality

Contact with Questions:

Yvette Granata

ygranata@umich.edu

This course provides an introduction to key software tools, techniques, and fundamental concepts supporting digital media arts production and design. Students will learn and apply the fundamentals of design and digital media production using software applications and web-based coding techniques, and will study the principles of design that translate across multiple forms of media production.

UARTS 260/360/460/560 – THE BIG CITY: Lost & Found in XR

Contact with Questions:

Matthew Solomon & Sara Eskandari

mpsolo@umich.edu / seskanda@umich.edu

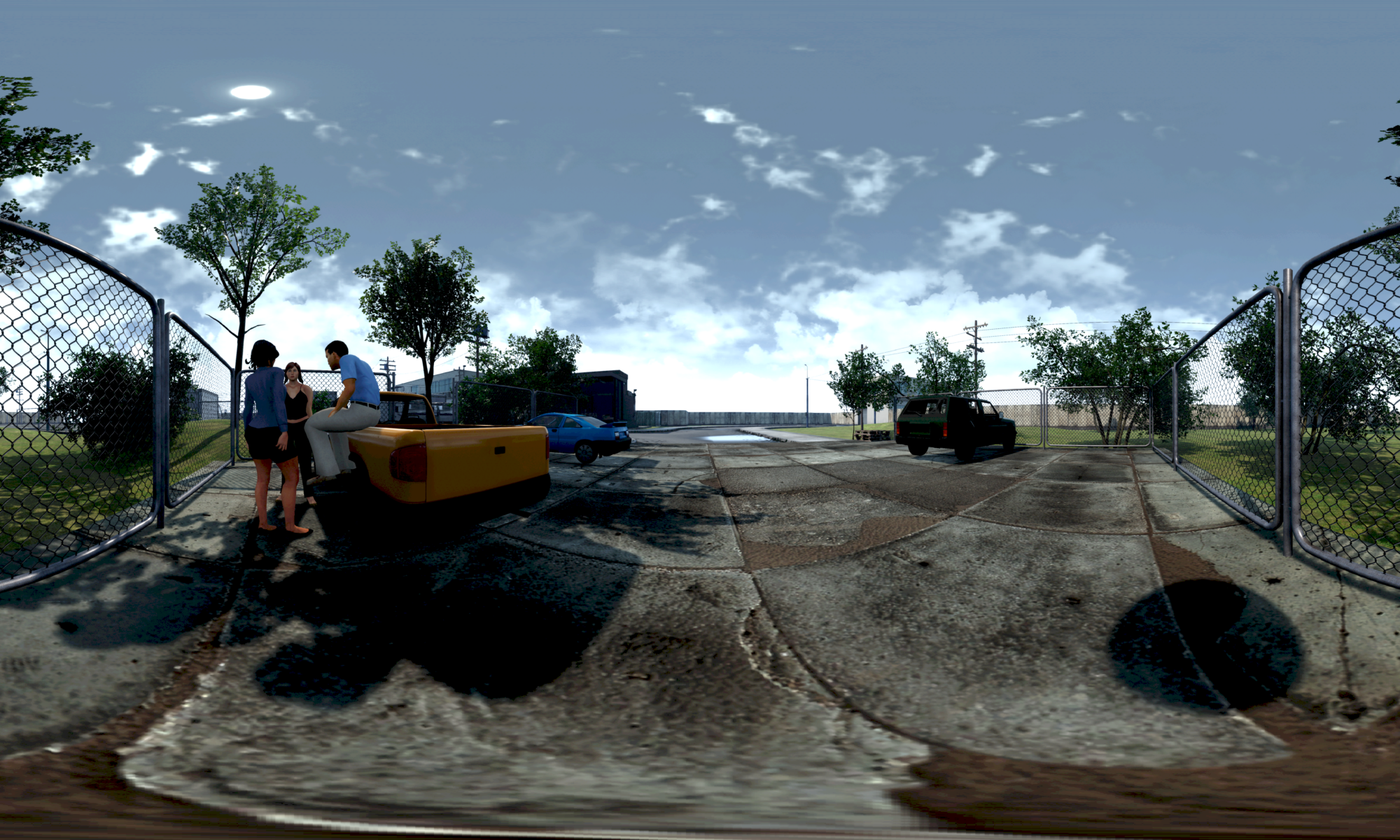

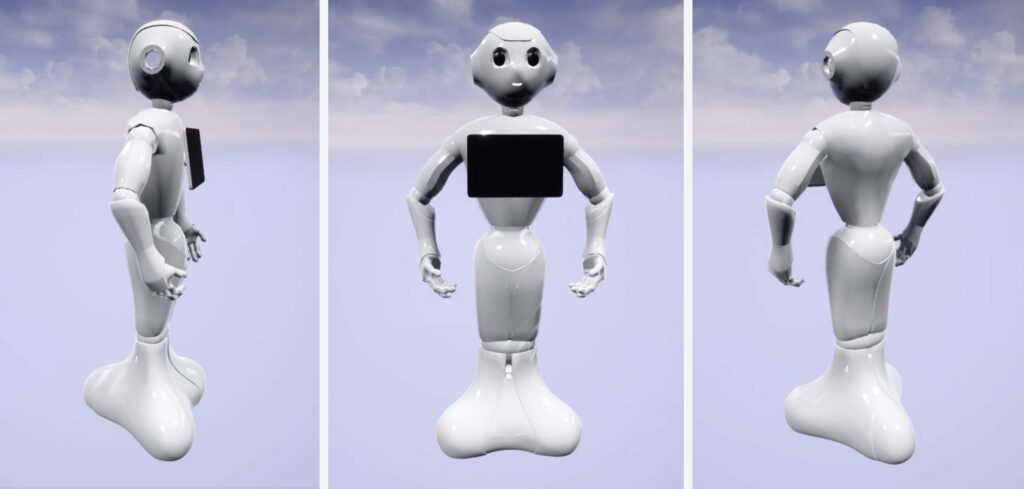

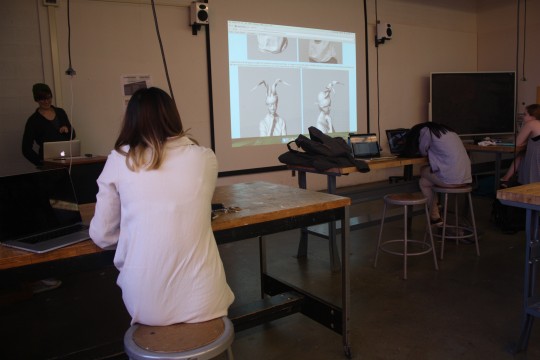

No copies of the 1928 lost film THE BIG CITY are known to exist; only still photographs, a cutting continuity, and a detailed scenario of the film survive. This is truly a shame, because the film featured a critical mass of Black performers, something extremely uncommon at the time. Using Unreal Engine, detailed 3D model renderings, and live performance, students will take users back in time into the fictional Harlem Black Bottom cabaret and clubs shown in the film. Students will work in a small game development team to create a high-fidelity historical recreation of the sets using 3D modeling, 2D texturing, level design, and game development pipelines. They will also experience a unique media pipeline that combines game design for live performance with cutting-edge virtual production. The project will place particular focus on detailed documentation, to honor the preserved materials from THE BIG CITY that make this endeavor possible and the Black history that fuels it.

MOVESCI 313 – The Art of Anatomy

Contact with Questions:

Melissa Gross & Jenny Gear

mgross@umich.edu / gearj@umich.edu

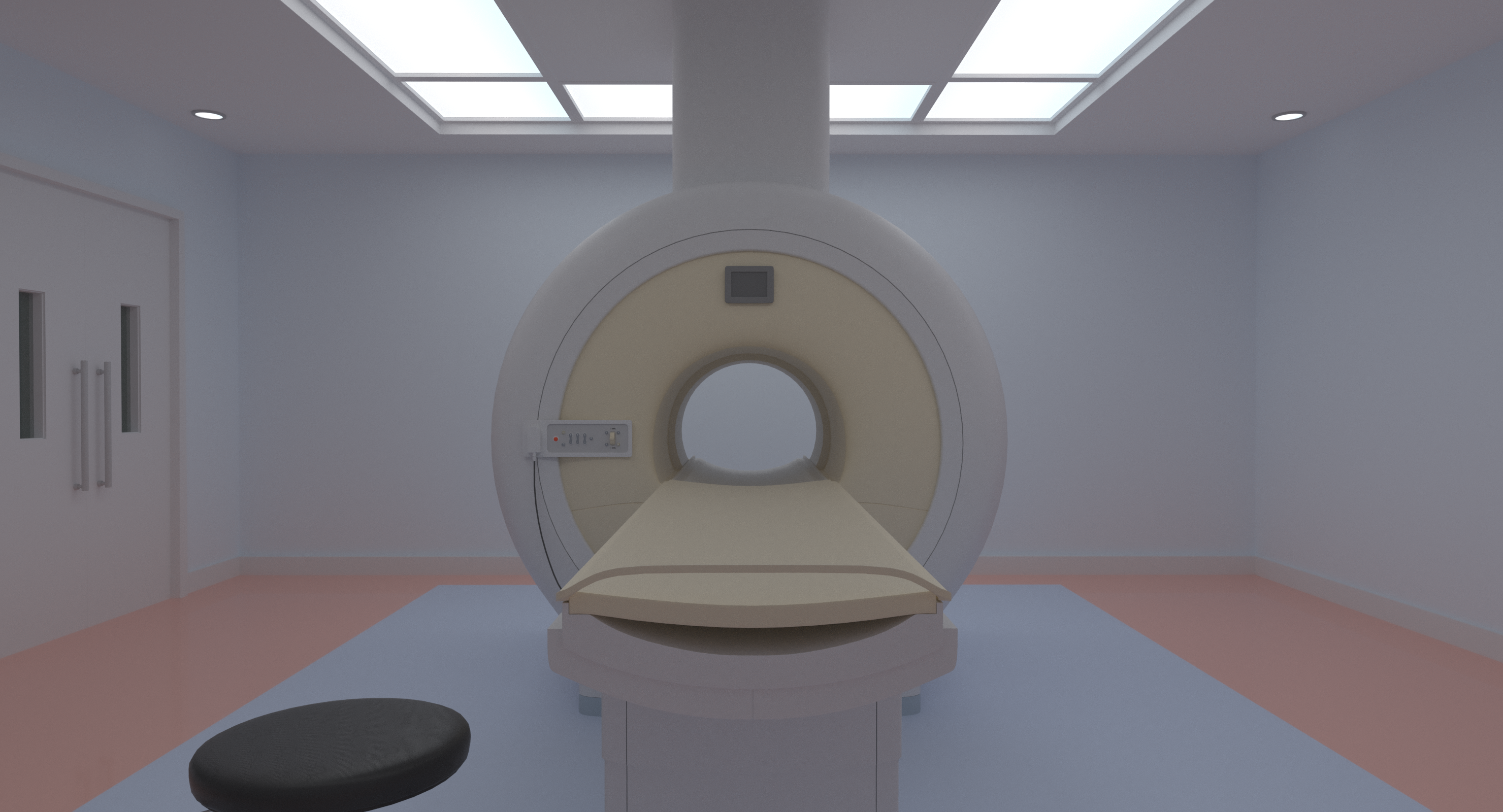

Learn about human anatomy and how it has been taught throughout history across a variety of media, including the recent adoption of XR tools. Students will get hands-on experience integrating and prototyping AR and VR visualization technologies for medical and anatomical study.

ARCH 565 – Research in Environmental Technology

Contact with Questions:

Mojtaba Navvab

moji@umich.edu

This course introduces research methods in environmental technology. Qualitative and quantitative research results are studied with regard to their impact on architectural design. Each course participant undertakes an investigation in a selected area of environmental technology. The experimental approach may use physical modeling, computer simulation, or other appropriate methods, such as VR.

FTVM 455.004 – Topics in Film: Eco Imaginations

WGS 412.001 – Fem Art Practices

Contact with Questions:

Petra Kuppers

petra@umich.edu

These courses will include orientations to XR technologies and sessions using Unreal Engine and Quixel 3D assets to create immersive virtual reality environments.